AI for everyone!

AI mandates, KPIs, and OKRs!

If you’re not using AI for everything, YOU are the problem.

I’m so very tired of people shouting from the rooftops that if you’re not immediately all in on AI, you’re a Luddite, you don’t want progress, you want people to die because of missed advancements in science, or you’re going to be left behind.

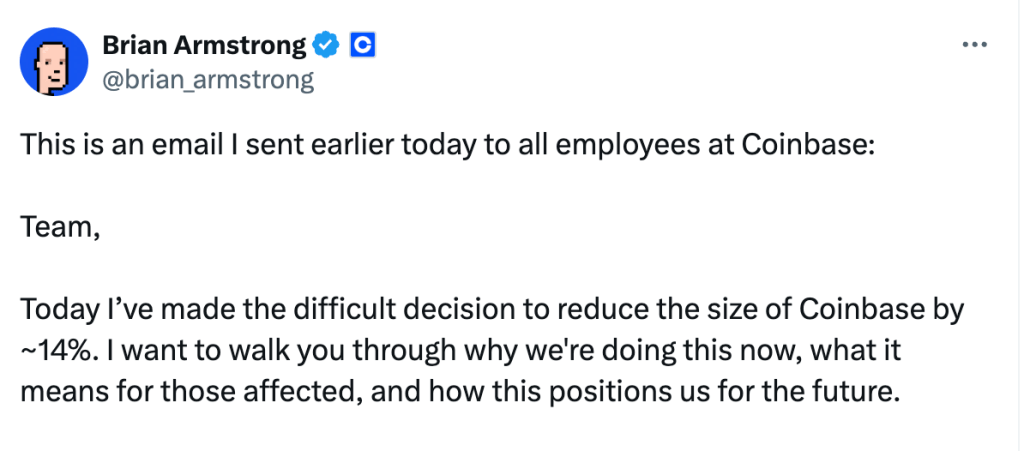

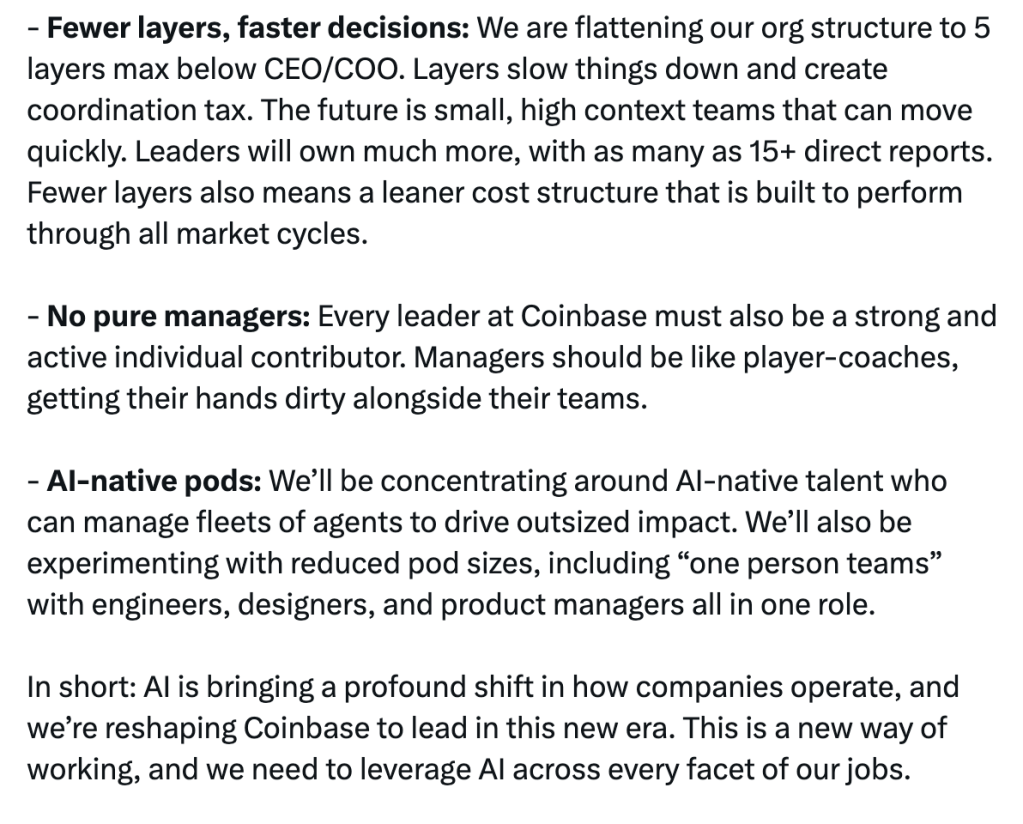

This week the Co-founder and CEO of Coinbase sent this memo to employees and posted it on X. His message also included this:

Here’s the deal: maybe this works for a company that can “return to the speed and focus of our startup founding, with AI at our core”. For large legacy companies, the government, or any highly regulated industry, this is not going to happen. What this does do is create more fear for employees who have to wonder if this will happen to them.

I’m no CEO and I’ve never been in a chief-level role, so take this for what it is, my observation from working with companies across the Global Fortune 500, but I think these ideas are not compatible with how humans work.

The point about fewer layers and faster decisions notes that leaders will have 15+ direct reports and be expected to move quickly with less coordination. Then, in the point about no pure managers, managers are also expected to do the work as well as lead: the whole player/coach concept.

The average age at which people receive leadership training was 42, while they are often in supervisor roles by age 33, leaving a gap of roughly a decade with no leadership training or enablement. It was also found that “Prior research has shown that less than 10 percent of leaders, left to their own devices, will have any personal plan of development without the encouragement of some formalized process sponsored by their company.”*

So we expect leaders, who likely have little or no formal training, to lead more people plus the machines, and to balance doing the work with leading. I’ve seen people with access to an abundance of leadership development resources still struggle to lead, because leadership is part art and part science. And when you’re torn between leading and doing the work, your KPIs will tell you where you’ll spend most of your time.

Lately I’ve been noodling on the idea people keep sharing that “AI is not going to take your job, it’s someone who knows how to use AI that will”. I had the realization that this is a great statement if you want to gaslight people into believing they are in competition with the person sitting next to them. It keeps you from examining the ways corporations are leveraging AI to keep you stuck in fear, uncertainty, and doubt, and it has you believe that Frank from accounting is your biggest enemy in the fight for a job in the workforce of the future.

Here’s the deal. I don’t care what Brian at Coinbase chooses to do with his company, because I can acknowledge I am not in his shoes, I don’t talk to his board, and I don’t see the capabilities of his employees. Perhaps they really are ready for this future he describes and they have a foundation of psychological safety, trust, effective communication, and change management where they’ll be wildly successful. Perhaps they’ve found ways to move beyond utilization metrics around AI to focus on actual outputs and outcomes. Maybe their processes include enough time to also slow down, be strategic, and consider the ethical, social, and environmental impacts of their consumption and utilization of AI.

I’m not here to tell anyone not to use AI. I’m asking you to invest in your own learning to understand where AI plays a role in your life and how your use of AI impacts those around you and the planet.

I’m asking you not to look at the people around you as the reason you’ll “get left behind” but to look at the systems in place that are telling you that it’s your friends and neighbors who will cost you your job. I’m not discounting that you may be in a role that AI could replace, but the decision to replace you with AI is not likely coming from your peers, who are also struggling to figure out AI.

I’m also asking you to look at AI as what it is. What we’re all being asked to use are Large Language Models (LLMs) that are predictive and nothing more. They work or don’t work based on the data and inputs. They are not creative, they’re not innovative, they don’t do anything on their own. We are not talking about AI/ML used in scientific research looking to cure cancer or fight diseases. Are you really doing better work with the LLMs or are you simply shortcutting your work at the expense of your ability to learn, retain information, and develop judgement and understanding?

If we as humanity decide the tradeoffs to people and the planet are worth it and if we are ok with people losing opportunities to learn and develop judgement, to stay employed, or to be a “productive member of society” we should get moving on what the future will look like for those who are displaced by these “AI-native pods” and “one person teams”. We should look at how we will prevent society from becoming completely unable to think for ourselves when we offload all of the cognitive work to AI that sounds smart but doesn’t make you smart. We should find ways to ensure people stay housed and fed and able to access healthcare when they are deemed unfit for working in the AI workplace.

We also should demand answers about what equitable compensation looks like in the world Brian describes. If I, as a people manager, am expected to lead more people AND work as an IC, and our outputs are potentially multiple times greater than before, do I get paid significantly more to still work the same hours, or do I get to work fewer hours and keep my pay the same while enjoying more time off? Does part-time work suddenly become full-time because that’s all you need to continue to output what’s expected of your role? The company shouldn’t be the only entity gaining profits. If people are doing more, increasing productivity, and increasing outcomes, shouldn’t they also be compensated at a rate commensurate with the gains the company receives? Or are we just being duped into doing more with and for less…

I’m seeing pockets of interesting use where people are creating local LLMs that can run on your own computer without using cloud or data center resources. I’m seeing people ask questions about the ethics of tools that were built on stolen information. I have seen people show up and protest having huge data centers built in their backyards at the expense of the environment and the people who have to live near them.

I also understand that the people deep on the AI bandwagon will look at this and tell me to stop being so utopian, that this is the cost of doing business, and that this has happened before in the industrial revolution and we’ll adjust. If you are OK living a life where you know that people will be displaced and unable to care for themselves and their families, and you see that as “the cost of doing business”, you probably shouldn’t stick around here. I believe every person has inherent value, whether they’re the CEO of the biggest company in the world or someone with significant disabilities that prevent them from ever working.

I believe if we create progress we should also progress how we care for our people. The current state of the US is showing us we have more than enough money for things like war, but we are unwilling to put any of that into the health, human, and environmental work that literally enables our society to function. If caring about people and the planet is too utopian for you, I’m not your person.

If we were talking about using AI to solve world hunger, to house everyone who wants to be housed, to help people more effectively break through substance abuse, or if we were truly curing cancer, I think we could have a discussion about the tradeoffs of things like water use from data centers. I am personally not OK with dropping data centers all over the world so that more AI-native companies can show up selling things literally no one cares about or needs, while we’re told we have to have them or else.

Y’all, most of the time these AI discussions are exhausting. We’re facing a future that wants us to blindly rush forward when we need to slow down and deeply consider what this means to us individually and as a society. I personally don’t want to be employed and “thriving” knowing people around me somehow are “unemployable” and then unable to feed and care for their families. Each of us is accountable for our decision either to go with the flow and not rock the boat, or to get informed and choose differently. I don’t pretend to have all the answers or a path forward, but I hope that people will at least engage in this conversation so we can CHOOSE our future instead of having it dictated to us.

If you’ve made it this far, that’s pretty cool. I also recommend if you made it this far you should go outside and touch grass, do some yoga, or move your body in a way that resonates for you.

I believe that together we can build lives of meaning and purpose, where we can take advantage of technological advancements AND still take care of people and the planet, because in the end it’s all we have. If you’re still here, I hope you’ll join me in thinking through what AI means for you and where you fit into advocating for or building a future that cares for all of us, and that you’ll take time for yourself to ensure you can continue on this journey wherever it takes us.
